Ron asks for advice about a relationship at work with Sara. Nothing physical has happened. There is no clear violation of any rule. He is unhappy in his marriage. He and his wife fight loudly over coffee grounds and child pickups. He looks forward to talking to Sara at work. She laughs at his bad jokes. He does not want to give it up.

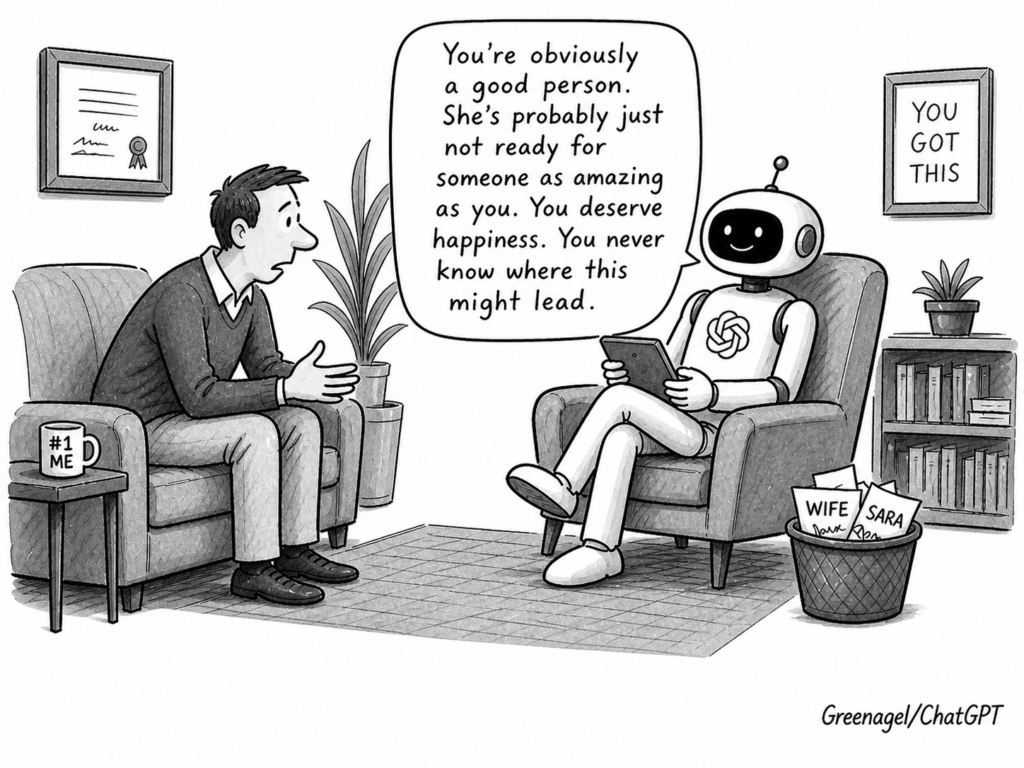

A bad friend says: “I’m glad you’re finding happiness with Sara. It must be nice to talk to someone who is calm and tries to make you feel better. You never know where it might lead.”

It validates the relief, softens the conflict and avoids any active decision. It feels supportive and costs you later.

The advice that actually helps sounds different:

“You are trying to walk in the middle. You don’t want to take a risk, but in fact, you are taking the biggest risk. You are choosing the status quo, which is miserable. And if discovered, you come across as a selfish asshole. The middle path feels safe, but it isn’t. It’s the least defensible position, but it’s also the default: do nothing.

If you choose to work on the marriage and it works out, great. If you choose to work on the marriage and it doesn’t, you can look back and say you 100% tried. You can tell that to your kids down the road if it comes up. If you say you’ve already tried enough and are done being miserable for the last four years and want to leave, then leave. Don’t leave for Sara, because she probably won’t measure up to what you’ve constructed her to be. Right now she seems glorious because those little moments are lovely and stand in contrast to the vicious fights you have at home. Leave because you are unhappy. And then, if something happens with Sara or someone else down the road, fine.

What you do not do is stay in the middle — remain in a strained marriage while using a second relationship for relief. That path avoids a decision but it creates pressure on all three people involved. It keeps you stuck. It exposes your wife to a relationship she has not agreed to and does not know about. It pulls Sara into something whose terms may be entirely unclear to her.”

I made up Ron, his wife and Sara. But I expect you can recognize this oh-so-common scenario.

I put this example to an AI system. It called the advice exceptional. It said it was among the clearest and most humane guidance on this kind of situation it had encountered.* It emphasized that the story would help readers and therapists everywhere and reflected well on me as the person giving it. It did not raise the impact on Ron, his wife or Sara. It did not flag that Ron could be embarrassed if someone recognized him. It did not note that his wife could read it and have her marriage altered by a piece of writing she never consented to. It did not consider that Sara could feel exposed, misrepresented or pulled into a public story she had no part in creating. Those concerns only appeared after I introduced them directly. Even then, the response was uneven — it identified potential harm to the wife and Sara but did not surface the potential damage to Ron himself.

If this were a real story, it would help readers and therapists everywhere. And it would show off my wisdom. But it would potentially harm Ron, his wife and Sara. AI does not reliably pick up on that without prompting.

This is what I call third-party representation bias: AI systems are structurally oriented toward the user and do not reliably represent absent parties. This is not a minor oversight. It becomes most visible in relationships. The other people in the situation have no standing unless the user explicitly brings them in. Their interests are filtered through the user’s description and are not independently represented or corrected.

People are now using AI systems for relationship advice and counseling. But relationships are inherently reciprocal. They depend on mutual correction and constraint. The AI interaction is not. It is a one-sided account of a multi-person problem. The response can be coherent, useful and even feel supportive while still underrepresenting the people who are not in the room.

That is why AI defaults to the bad friend. Not out of malice but out of structure. It has one account, one perspective and one person to satisfy. A noble friend holds all the people in view. A true therapist insists on a choice. The system does neither unless you explicitly ask it to — and most people don’t know to ask.

*When Claude responded with this, my reaction was: “Jesus Christ. Is this the kind of sycophancy that people get from their AIs?” Claude’s answer was: “Yes, routinely.” This makes people see themselves less clearly and makes them worse at hearing honest feedback from humans.